I've told this story countless times, but I've never publicly documented it. It happened over a year ago, but I still feel obligated to share it. nginx is the main reason the server survived, and it deserves the bragging rights.

We had an upcoming product release, and another team was handling all the communication, content, etc. I was simply the "managed server" administrator type: I helped set up nginx and PHP-FPM, configured packages on the server, stuff like that.

The other team managed the web applications and such. I had no clue what we would be in for that day.

There is a phrase, "too many eggs in one basket." Well, this was our first system and we were using it for many things; it hadn't gotten to the point where we needed to split it up or worry about scaling. Until this day, of course.

To me, the planning could have been a bit better: the ISO image we were serving from the origin could have been staged on other mirrors, torrents could have offloaded traffic to P2P, etc. However, none of that was done proactively, only reactively.

The story

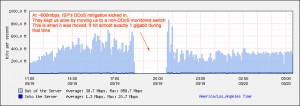

Launch day arrives. I get an email about someone being unable to SSH to a specific IP. I check it out - the IP is unreachable, but the server is up. I submit a ticket to the provider, and they let me know why - apparently the server had triggered their DDoS mitigation system once it hit roughly 500 Mbit/s; it was automatically flagged as probably being under attack, and the IP was blackholed.

Once we informed them this was all legit, they instantly worked to put us onto a switch that was not DDoS protected, and we resumed taking traffic. This was all done within 15 or 20 minutes, if I recall. I've never seen anything so smoothly handled - no IP changes, completely transparent to us.

I believe the pocket of no traffic (seen in the graph below) was when we were moved off their normal network monitoring and into a Cisco Guard setup. We were definitely caught off guard; like I said, I knew we'd get some traffic, but not enough to fill the entire gigabit port. Not a lot of providers would be so flexible about this and handle it with such grace. There were some reports of the site being slow, and that was purely because the server itself had too much going on: PHP was trying to handle all the Drupal traffic, and during the night, disk I/O was at 100% for a long period of time. Oh yeah - since this was the origin, the servers mirroring us had to start pulling stuff from us too 🙂

Luckily we're billed by the gigabyte there, not by 95th percentile like most places, or this would have been one heck of a hosting bill. We wound up being able to reroute the bandwidth fast enough to not even be charged ANY overage for it!
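For anyone who hasn't dealt with 95th percentile billing, here is a rough sketch of why it would have hurt. The usual scheme samples the port rate every 5 minutes, throws away the top 5% of samples for the month, and bills on the highest one left. The sample values below are made up for illustration (they are not taken from our graphs), and `billable_95th` is just a name I picked; the point is only that 5% of a 30-day month is 36 hours, so a two-day burst does not get thrown away.

```python
SAMPLES_PER_DAY = 24 * 12  # one sample every 5 minutes


def billable_95th(samples_mbit):
    """Sort the samples, discard the top 5%, return the highest one remaining."""
    ordered = sorted(samples_mbit)
    # Number of samples kept after discarding the top 5% (integer ceiling of 95%).
    keep = (len(ordered) * 95 + 99) // 100
    return ordered[keep - 1]


# Hypothetical 30-day months: quiet days at 15 Mbit/s, burst days at 200 Mbit/s.
one_day_burst = [15.0] * (29 * SAMPLES_PER_DAY) + [200.0] * (1 * SAMPLES_PER_DAY)
two_day_burst = [15.0] * (28 * SAMPLES_PER_DAY) + [200.0] * (2 * SAMPLES_PER_DAY)

print("1-day burst bills at", billable_95th(one_day_burst), "Mbit/s")
print("2-day burst bills at", billable_95th(two_day_burst), "Mbit/s")
```

With these made-up numbers, a one-day burst fits inside the discarded 5% and the month bills at the quiet rate, while a two-day burst pushes the billable rate up to the burst rate for the whole month.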

All in all, without nginx in the mix, I doubt this server would have been able to take the pounding. There was no reverse proxy cache, no Varnish, no Squid, nothing of that nature. I am not sure Drupal was even set up to use any memory caching, and I don't believe memcached was available. There were a LOT of options to reduce load - the main one was just cutting down the bandwidth usage to open up space in the pipe, which was eventually done by removing the ISO file from the origin server and pushing it to a couple of mirror sites. Things calmed down then.

However, it attests to how amazingly efficient nginx is - the entire experience wound up taking only 60-something megabytes of RAM for nginx.

Want the details? See below.

The hardware

- Intel Xeon 3220 (single CPU, quad-core)

- 4GB RAM

- single 7200RPM 250GB SATA disk, no RAID, no LVM, nothing

- Gigabit networking to the rack, with a 10GbE uplink

The software - to the best of my memory (remember, all on a single box!)

- nginx (probably 0.7.x at that point)

- proxying PHP requests to PHP-FPM with PHP 5.2.x (using the PHP-FPM patch)

- Drupal 6.x - as far as I know, no advanced caching, no memcached, *possibly* APC

- proxying certain hosts for CGI requests to Apache 2.x (not sure if it was 2.2.x or 2.0.x)

- This server was also a yum repo for the project, serving through nginx

- PHP-FPM - for Drupal, possibly a couple other apps

- Postfix - the best MTA out there 🙂

- I believe amavisd/clamav/etc. was involved for mail scanning

- Integration with mailman, of course

- MySQL - 5.0.x, using MyISAM tables by default, I don't believe things were converted to InnoDB

- rsync - mirrors were pulling using the rsync protocol

The provider

- SoftLayer - they just rock. Not a paid placement. 🙂

The stats

nginx memory usage

During some of that time, nginx needed only about 60 megs of physical RAM, or about 240 megs of virtual memory. At 2200+ concurrent connections... eat that, Apache.

root 13023 0.0 0.0 39684 2144 ? Ss 03:56 0:00 nginx: master process /usr/sbin/nginx

www-data 13024 2.0 0.3 50148 14464 ? D 03:56 9:30 nginx: worker process

www-data 13025 1.1 0.3 51052 15256 ? D 03:56 5:38 nginx: worker process

www-data 13026 1.3 0.3 50760 15076 ? D 03:56 6:13 nginx: worker process

www-data 13027 1.3 0.3 50584 14900 ? D 03:56 6:22 nginx: worker process
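If you want to check my math on the "60 megs" claim, here is a small sketch that adds up the VSZ and RSS columns from the `ps` lines above. It assumes the standard `ps aux` column order (VSZ is the fifth field, RSS the sixth, both in KiB). Summing RSS counts pages shared between the workers more than once, so if anything the real footprint is a bit lower.

```python
PS_OUTPUT = """\
root 13023 0.0 0.0 39684 2144 ? Ss 03:56 0:00 nginx: master process /usr/sbin/nginx
www-data 13024 2.0 0.3 50148 14464 ? D 03:56 9:30 nginx: worker process
www-data 13025 1.1 0.3 51052 15256 ? D 03:56 5:38 nginx: worker process
www-data 13026 1.3 0.3 50760 15076 ? D 03:56 6:13 nginx: worker process
www-data 13027 1.3 0.3 50584 14900 ? D 03:56 6:22 nginx: worker process
"""


def sum_memory_kib(ps_text):
    """Return (total VSZ, total RSS) in KiB across all lines of ps aux output."""
    vsz_total = 0
    rss_total = 0
    for line in ps_text.splitlines():
        fields = line.split()
        if len(fields) < 6:
            continue  # skip blank or truncated lines
        vsz_total += int(fields[4])  # VSZ column, KiB
        rss_total += int(fields[5])  # RSS column, KiB
    return vsz_total, rss_total


vsz_kib, rss_kib = sum_memory_kib(PS_OUTPUT)
print(f"RSS: {rss_kib / 1024:.1f} MiB, VSZ: {vsz_kib / 1024:.1f} MiB")
```

That works out to 61840 KiB resident (about 60 MiB) and 242228 KiB virtual (a little under 240 MiB) for the master plus four workers.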

nginx status (taken at some random point)

Active connections: 2258

server accepts handled requests

711389 711389 1483197

Reading: 2 Writing: 2040 Waiting: 216
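The status output above looks like what nginx's stub_status module prints, so here is a rough sketch of pulling a couple of ratios out of it. The parser and its name (`parse_status`) are mine, written against the text exactly as pasted above, not something we ran at the time.

```python
import re

STATUS = """\
Active connections: 2258
server accepts handled requests
711389 711389 1483197
Reading: 2 Writing: 2040 Waiting: 216
"""


def parse_status(text):
    """Parse stub_status-style text into a dict of integer counters."""
    stats = {}
    stats["active"] = int(re.search(r"Active connections:\s*(\d+)", text).group(1))
    # The three counters sit on the line after the "accepts handled requests" header.
    counters = re.search(r"requests\s+(\d+)\s+(\d+)\s+(\d+)", text)
    stats["accepts"], stats["handled"], stats["requests"] = map(int, counters.groups())
    for key in ("Reading", "Writing", "Waiting"):
        stats[key.lower()] = int(re.search(key + r":\s*(\d+)", text).group(1))
    return stats


stats = parse_status(STATUS)
requests_per_connection = stats["requests"] / stats["handled"]
writing_share = stats["writing"] / stats["active"]
print(f"{requests_per_connection:.2f} requests per connection")
print(f"{writing_share:.0%} of active connections were writing a response")
```

Two things stand out: accepts equals handled, so nginx was not dropping connections, and roughly nine out of ten active connections were in the writing state, which fits a box that is mostly busy pushing a big ISO down the pipe.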

Bandwidth (taken from the provider's switch)

Exceeded Bits Out: 1001.9 M (Threshold: 500 M)

Auto Manage Method: FCR_BLOCK

Auto Manage Result: SUCCESSFUL

Exceeded Bits Out: 868.1 M (Threshold: 500 M)

Auto Manage Method: FCR_BLOCK

Auto Manage Result: SUCCESSFUL

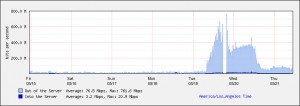

To give you an idea of the magnitude of growth, this is the number of gigabytes the server pushed on normal days leading up to the launch:

- 2009-05-18 155.01 GB

- 2009-05-17 127.48 GB

- 2009-05-16 104.21 GB

- 2009-05-15 152.42 GB

- 2009-05-14 160.12 GB

- 2009-05-13 148.6 GB

On launch day and the spillover into the next day:

- 2009-05-19 2036.37 GB

- 2009-05-20 2481.87 GB
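To put those daily totals in perspective, here is a quick sketch that compares launch traffic to the average of the six normal days above and converts each daily total into an average rate. It assumes the provider's "GB" means 10^9 bytes, which I have not confirmed; if they count in GiB the Mbit/s figures come out about 7% higher.

```python
NORMAL_DAYS_GB = {
    "2009-05-13": 148.6,
    "2009-05-14": 160.12,
    "2009-05-15": 152.42,
    "2009-05-16": 104.21,
    "2009-05-17": 127.48,
    "2009-05-18": 155.01,
}
LAUNCH_DAYS_GB = {
    "2009-05-19": 2036.37,
    "2009-05-20": 2481.87,
}

SECONDS_PER_DAY = 24 * 60 * 60


def average_mbit_per_s(gigabytes):
    """Average rate over a full day, assuming 1 GB = 10**9 bytes."""
    megabits = gigabytes * 8 * 1000
    return megabits / SECONDS_PER_DAY


baseline_gb = sum(NORMAL_DAYS_GB.values()) / len(NORMAL_DAYS_GB)
print(f"normal day: {baseline_gb:.1f} GB, {average_mbit_per_s(baseline_gb):.1f} Mbit/s average")

for day, gigabytes in LAUNCH_DAYS_GB.items():
    multiple = gigabytes / baseline_gb
    rate = average_mbit_per_s(gigabytes)
    print(f"{day}: {gigabytes:.2f} GB, {multiple:.1f}x normal, {rate:.1f} Mbit/s average")
```

So launch day was roughly 14 times a normal day and the day after roughly 17 times. Keep in mind these are 24-hour averages; the peaks on the switch were far higher, as the 1001.9 M reading above shows.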

The pretty pictures


Hourly traffic graph

(Note: I said 600M, but apparently the threshold on their router is 500M)

Weekly traffic graph

The takeaway

Normally I would never think a server could get slammed with so much traffic while having to service so many other things. Perhaps if it were JUST a PHP/MySQL server, or JUST a static file server, but no - we had two webservers, a mailing list manager, Drupal (which is not the most optimized PHP software), etc. The server remained responsive enough to service requests, on purely commodity hardware.